The challenge:

We needed to migrate a Drupal application to AWS from a VPS that was under constant high load and therefore underperforming. Our goal was to build a scalable solution and consolidate all components of the platform on the same infrastructure. In this project, Drupal serves purely as a backend: a frontend application queries it through the JSON API and also fetches assets such as images and static JSON files from it.

The main reason the existing solution was underperforming was that all components (Nginx, MariaDB, Solr, Redis) were running on a single VPS. The only option was to scale vertically, whereas ideally you would scale horizontally.

As a consequence, the frontend application, which serves thousands of visitors a day, suffered from poor backend performance. This was partially mitigated by caching in the frontend application, but because that is a legacy application currently being rebuilt, this isn’t an optimal fix.

The solution:

As mentioned, the entire application is currently being rebuilt and all new components will be hosted in AWS. The Drupal backend therefore also needed to be migrated to the new infrastructure in AWS.

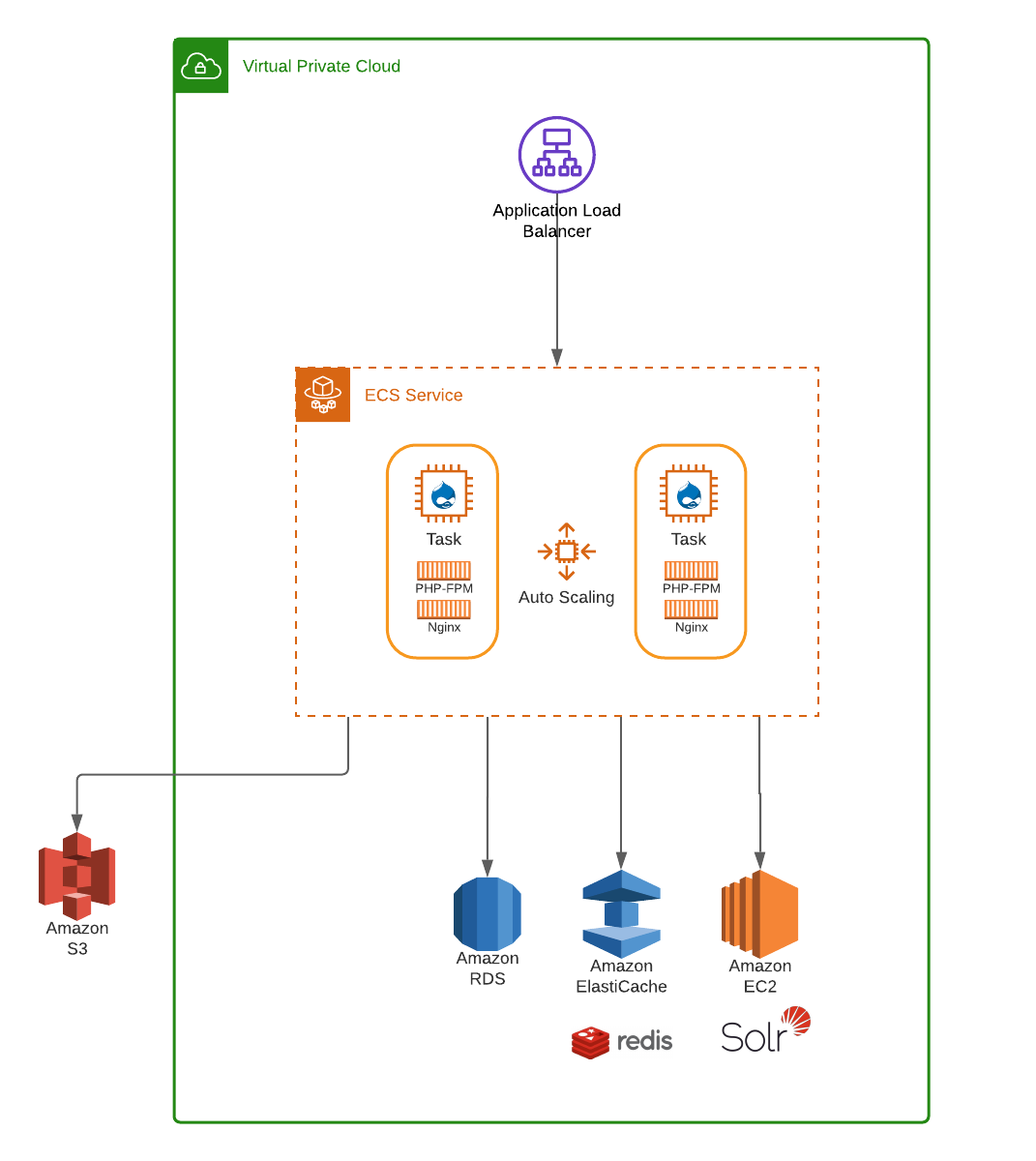

We started of by decoupling all components and ended with the following architecture, where we can scale out (and scale in) on component level:

For the Drupal application itself we chose Amazon Elastic Container Service (ECS) on Fargate. We configured it to use an Application Load Balancer (ALB) to distribute traffic evenly across the tasks in our service. By using Docker images, we can use the same configuration for development on our local machines as for running the application in production, although an image only reaches production after it has passed our CI/CD pipeline.

Our setup uses two containers, one for Nginx and one for PHP-FPM. Both run in a single task within the Fargate service, which we configured for autoscaling. If CPU or memory utilization exceeds a certain threshold, we scale out automatically, and after a cooldown period we scale back in.
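The scale-out and scale-in behaviour described above can be modelled in a few lines. This is only a simplified sketch of threshold-based scaling with a cooldown, not how AWS implements it and not our actual configuration: the thresholds, cooldown, and task limits are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class ScalingPolicy:
    # All values are hypothetical; the real thresholds are not stated in this post.
    scale_out_threshold: float = 70.0  # percent CPU or memory utilization
    scale_in_threshold: float = 40.0   # percent CPU or memory utilization
    cooldown_seconds: int = 300
    min_tasks: int = 2
    max_tasks: int = 10


def desired_task_count(policy, current_tasks, cpu_percent, memory_percent,
                       seconds_since_last_scaling):
    """Return the task count a simple threshold policy would ask for."""
    busiest = max(cpu_percent, memory_percent)

    # Scale out as soon as either metric crosses the upper threshold.
    if busiest > policy.scale_out_threshold:
        return min(current_tasks + 1, policy.max_tasks)

    # Scale in only when both metrics are low and the cooldown has elapsed.
    if (busiest < policy.scale_in_threshold
            and seconds_since_last_scaling >= policy.cooldown_seconds):
        return max(current_tasks - 1, policy.min_tasks)

    return current_tasks
```

In practice this decision is made by the autoscaling configuration on the ECS service; the sketch only shows why a cooldown keeps the task count from flapping when load drops briefly.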

The other components were also migrated to AWS, making use of managed AWS services as much as possible:

- For caching we use Amazon ElastiCache with the Redis engine.

- As a search engine we use Solr, because of its great performance and the available Drupal module. The existing frontend also relies on this component. For now we migrated it to an EC2 instance, but we plan to make it scalable later on.

- The database was migrated to Amazon Aurora.

- All static files are stored in S3, which we achieved using the Drupal module S3 File System.

With this setup, state is kept in services like ElastiCache and RDS rather than in the containers, so the application can scale in and out without losing any data.

Drush and cron commands:

If you have ever worked with Drupal, you have probably used Drush commands, and cron jobs are almost unavoidable. We handle both with a separate Docker image that contains the same Drupal application and that we can run in a Fargate service.

This service doesn’t have any tasks running by default, but these can be triggered by running a pipeline in Bitbucket where you can specify the Drush command you want to run.

The same goes for cron, for which we scheduled an hourly pipeline that executes all cron jobs.
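As an illustration, a pipeline step could start such a one-off task through the ECS RunTask API with a command override. This post does not say which mechanism the pipeline actually uses, so treat the following as one possible sketch. The function name and all of its arguments (cluster, task definition, container name, subnets) are hypothetical, and it assumes `drush` is on the container's PATH.

```python
import shlex


def build_run_task_params(drush_command, cluster, task_definition,
                          container_name, subnets):
    """Build keyword arguments for the ECS RunTask API that run one Drush command."""
    return {
        "cluster": cluster,
        "taskDefinition": task_definition,
        "launchType": "FARGATE",
        "count": 1,
        "networkConfiguration": {
            "awsvpcConfiguration": {
                "subnets": list(subnets),
                "assignPublicIp": "DISABLED",
            }
        },
        "overrides": {
            "containerOverrides": [
                {
                    "name": container_name,
                    # Replaces the image's default command for this run only.
                    "command": ["drush", *shlex.split(drush_command)],
                }
            ]
        },
    }


# With boto3 installed and credentials configured, the result could be passed on:
#   boto3.client("ecs").run_task(**build_run_task_params(...))
```

The hourly cron pipeline would then be the same call with `cron` as the Drush command.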

Tips:

- If you use the JSON API, verify that caching works properly by checking the network tab in your browser’s developer tools. When you are logged in, the response header ‘x-drupal-dynamic-cache’ should have the value HIT, while for anonymous users the response header ‘x-drupal-cache’ should have the value HIT. There are several reasons why you could encounter a MISS on these response headers, but usually it’s a misconfiguration of one of the components. In our case, the cause was an incorrect setting on the “/admin/config/development/logging” page in Drupal, which caused error messages to be displayed.

- Make use of Infrastructure as Code. We use the AWS CDK, which works well for us (refer to other blog post).

- When you run a dedicated EC2 instance continuously, consider a Reserved Instance. Compared to on-demand pricing, this can save you a lot of money.

- For migrating assets to S3, use the AWS CLI (aws s3 sync) to synchronize them. It is very fast and works recursively through directories, which is very useful during a migration.

- Create a dashboard in CloudWatch so you can monitor the performance of your platform.
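The cache check from the first tip can also be scripted instead of read from the browser. Below is a minimal sketch using only the Python standard library; the helper names are our own invention and the URL in the usage note is a placeholder.

```python
import urllib.request


def cache_status(headers, logged_in):
    """Return the value of the relevant Drupal cache header, or 'ABSENT'."""
    # Logged-in responses are judged by the dynamic cache header,
    # anonymous responses by the page cache header.
    name = "x-drupal-dynamic-cache" if logged_in else "x-drupal-cache"
    lowered = {key.lower(): value for key, value in headers.items()}
    return lowered.get(name, "ABSENT").strip().upper()


def fetch_headers(url):
    """Fetch a URL anonymously and return its response headers as a dict."""
    with urllib.request.urlopen(url) as response:
        return dict(response.headers.items())
```

For example, `cache_status(fetch_headers("https://example.com/jsonapi/node/article"), logged_in=False)` would report the anonymous cache status for that hypothetical endpoint. Request the URL twice before drawing conclusions, since the first request after a cache clear is normally a MISS.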